Photo by David Travis via Unsplash

A/B Testing Method

A/B testing is a research technique that lets you compare the effectiveness of two variants of a webpage or product against a clearly defined, measurable goal. To conduct an A/B test, randomly split incoming traffic into two equal parts: show half of the visitors version A and the other half version B. At the end of the experiment, check which version resulted in a higher rate of the targeted action, such as registrations, purchases, or page views. This shows you which version is more effective at achieving that goal.
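One common way to make the 50/50 split is to assign each visitor to a group deterministically, so a returning visitor keeps seeing the same version. The Python sketch below is a minimal illustration, assuming each visitor has a stable identifier (such as a first-party cookie value); the `assign_variant` function and the `homepage-test` experiment name are hypothetical, not part of any particular testing tool.

```python
import hashlib


def assign_variant(user_id: str, experiment: str = "homepage-test") -> str:
    """Deterministically assign a visitor to "A" or "B" with a 50/50 split.

    Hashing the (hypothetical) experiment name together with the user ID
    means the same visitor always lands in the same group, while separate
    experiments get independent splits.
    """
    key = f"{experiment}:{user_id}".encode("utf-8")
    # Interpret the first 8 bytes of the SHA-256 digest as an integer,
    # then map it to one of 100 buckets.
    bucket = int.from_bytes(hashlib.sha256(key).digest()[:8], "big") % 100
    return "A" if bucket < 50 else "B"


if __name__ == "__main__":
    ids = [f"user-{i}" for i in range(10_000)]
    share_a = sum(assign_variant(uid) == "A" for uid in ids) / len(ids)
    print(f"share of visitors in A: {share_a:.3f}")
```

Hashing rather than drawing a fresh random number on every page view keeps the assignment stable across visits without storing it anywhere.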

A/B testing is inexpensive and relatively easy to set up and run, but it is important to understand its limitations. It provides reliable data only on fully implemented designs with minor variations, only when the pages in question receive enough traffic, and only when the goals can be tracked automatically by your analytics tools.

Preparation

A/B testing is often more effective when it is informed by these complementary methods.

Steps

- Formulate a hypothesis

To begin, decide which assumptions about your design you will test. This decision should be informed by your other research and discovery efforts. For example, you might test whether your related-item promotions are visible enough, or whether potential customers have enough information about your product when you present them with a "buy now" button.

- Identify the target metric

Once you've decided which hypothesis to test, establish how you'll measure it. Lower bounce rate? More clickthroughs on key calls to action (CTAs)? Make sure your metric is something you can track with your analytics and testing tools.

- Select one test item

This can be anything on the page or product, but it should be only one thing. If you want to test more than one design element, say button placement and button color, run them as separate tests. Otherwise, you will not know which change influenced your results.

- Determine the test sample size

Calculate how many visitors each version needs to produce a statistically significant result. Sample size depends mainly on your current (baseline) conversion rate and on the smallest change you want to be able to detect: the larger the change, the smaller the sample needed. You can find sample size calculators at AB Tasty, Unbounce, and AB Test Guide.

- Determine the test duration

In general, tests should last at least a week, even if you reach your sample size target sooner. This helps account for differences in behavior between weekdays and weekends.

- Run an A/A test

A/A testing means showing your two groups identical pages and tracking the results. If the two groups produce matching results (no statistically significant difference), you can be more confident that your A/B test setup is sound. If the results differ from one "A" page to the other, there is likely a problem with how your test is set up, such as uneven traffic splitting or faulty tracking. Correct it before moving on.

- Run the A/B test

Once your test is properly scoped and set up, run it. Tests are generally performed with third-party software that distributes the page versions and records the resulting metrics.

- Analyze and integrate the results

Ideally, your test will provide the data to support or refute the hypothesis you formulated in the first step. Use it to inform design recommendations, but be careful not to generalize your results: A/B testing provides data only on the element you tested, and it does not explain why users acted as they did.
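The sample size, duration, and analysis steps above can be sketched in a few lines of Python. This is a minimal sketch assuming a binary conversion metric (a visitor either registers or purchases, or does not) and the common normal-approximation formulas for comparing two proportions. The function names, the default 5% significance level and 80% power, and the example numbers are illustrative assumptions; the calculators named above may use slightly different formulas and give slightly different figures.

```python
import math
from statistics import NormalDist


def sample_size_per_variant(baseline_rate: float, expected_rate: float,
                            alpha: float = 0.05, power: float = 0.8) -> int:
    """Visitors needed in EACH group to detect a change from baseline_rate
    to expected_rate with a two-sided test (normal approximation)."""
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # about 1.96 for alpha = 0.05
    z_beta = NormalDist().inv_cdf(power)           # about 0.84 for 80% power
    p_avg = (baseline_rate + expected_rate) / 2
    numerator = (
        z_alpha * math.sqrt(2 * p_avg * (1 - p_avg))
        + z_beta * math.sqrt(baseline_rate * (1 - baseline_rate)
                             + expected_rate * (1 - expected_rate))
    ) ** 2
    # A smaller expected change means a smaller denominator, so a larger sample.
    return math.ceil(numerator / (expected_rate - baseline_rate) ** 2)


def estimate_duration_days(n_per_variant: int, daily_visitors: int,
                           min_days: int = 7) -> int:
    """Days needed for both groups to reach the sample size, never less than a week."""
    days_for_sample = math.ceil(2 * n_per_variant / daily_visitors)
    return max(min_days, days_for_sample)


def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int):
    """Return (z, two-sided p-value) for the difference in conversion rates."""
    p_a, p_b = conv_a / n_a, conv_b / n_b
    p_pool = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(p_pool * (1 - p_pool) * (1 / n_a + 1 / n_b))
    if se == 0:  # nobody converted, or everybody did: no difference to test
        return 0.0, 1.0
    z = (p_b - p_a) / se
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value


if __name__ == "__main__":
    # Illustrative numbers only: 5% baseline conversion, hoping for 6%.
    n = sample_size_per_variant(0.05, 0.06)
    print("visitors per variant:", n)
    print("days at 1,000 visitors/day:", estimate_duration_days(n, 1000))
    z, p = two_proportion_z_test(400, n, 520, n)
    print(f"z = {z:.2f}, p = {p:.4f}")
```

For a 5% baseline and a hoped-for 6%, this sketch asks for just over 8,000 visitors per variant. The same `two_proportion_z_test` can check an A/A test: two identical pages should usually give a large p-value. Keep in mind that at a 0.05 threshold, roughly one in twenty A/A tests will show a "significant" difference by chance alone.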

Outcomes

A/B testing typically produces insights and solutions focused on these areas:

- Navigation Effectiveness

A measure of how effective site or application navigation is relative to business and user goals.

- User Preference

Elements, arrangements, or qualities of experience design that users state or show are valuable to them.


Next Steps

- Category Design

Creating structures and schemes that make the location and use of content clear.

-

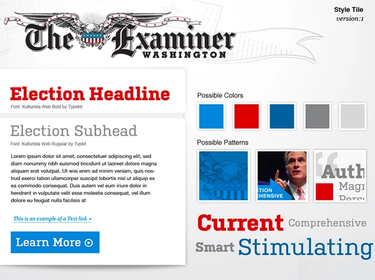

Style Tile Creation

Communicate the use of fonts, colors, and interface elements in a design system

- Taxonomy Design

Define a system for labeling and classifying content to make it easier to find, understand, and use.